On lobsters

The other day I was listening to an episode of Lenny's Podcast where the guest, Claire Vo, was brought in to talk about OpenClaw. Claire was introduced as someone who started off as a vocal skeptic of OpenClaw and is now running multiple lobster friends across three (!) Mac Minis.

Claire shared her screen and did a whole walkthrough of setting up OpenClaw, and I thought the episode did a good job of bringing people up to speed with what's happening in this space (I recently found out that none of my friends outside tech have even heard of OpenClaw).

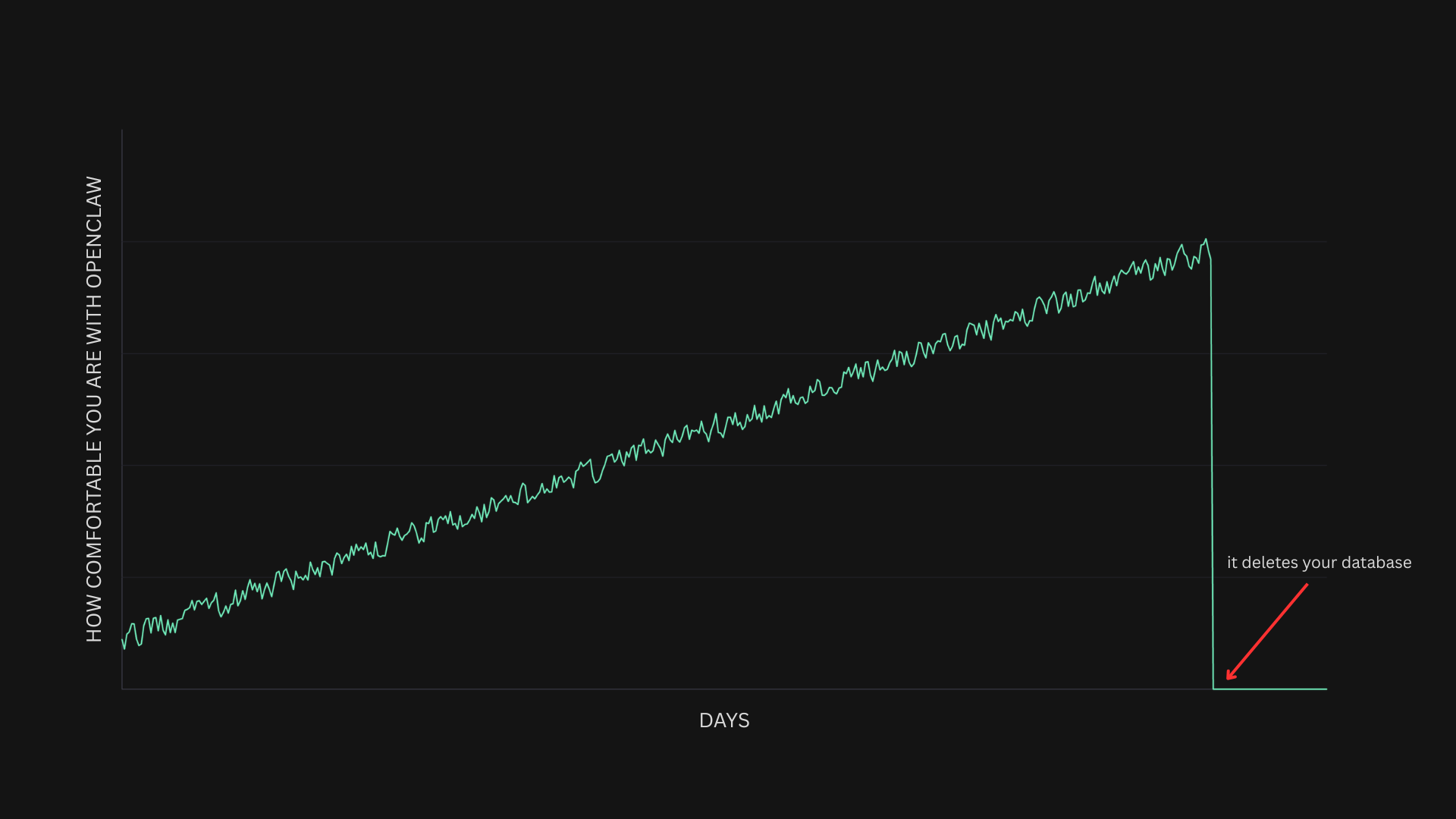

However, when asked about safety, Claire said something that really struck me. Her advice for people uneasy about OpenClaw's safety was basically to try it out with limited permissions, then grant it more access as they get comfortable. She called this a "progressive trust process" and said she feels "pretty comfortable about it now that I've used it more and more".

After a bit of back-and-forth, Lenny summarized the points she made: "So what I'm hearing is just, there are risks, start with not giving it access to things that you'd be afraid of it doing, and then as you experience it, you'll be like ok I can try this thing try that thing" [1].

Wait, what? That is not at all how security works.

Hearing this helped me connect some dots in reflections I've been having about how we use AI, and I've come to the conclusion that, whether with OpenClaw, another "claw", or the industry in general, we might be living through a bit of a turkey moment.

On turkeys

The concept of a turkey as I'm using it here comes from Nassim Taleb, who describes how the lessons we draw from past experience can sometimes have no value in predicting what happens next, and sometimes even negative value, because they blind us to the possibility of high-impact events.

Think of a Thanksgiving turkey: every feeding reinforces its belief that humans are its friends, right up until it gets a very unpleasant surprise (to say the least) the day before Thanksgiving.

Taleb explains it best:

“Consider a turkey that is fed every day. Every single feeding will firm up the bird’s belief that it is the general rule of life to be fed every day by friendly members of the human race “looking out for its best interests,” as a politician would say. On the afternoon of the Wednesday before Thanksgiving, something unexpected will happen to the turkey. It will incur a revision of belief.”

Nassim Taleb, The Black Swan

So when I was hearing Claire's advice, all I could think about were turkeys.

Because if you follow that advice exactly, you're not just opening yourself up to being surprised (like the turkey). By giving OpenClaw more access the more comfortable you get, you're increasing both the surprise factor and the potential damage when something does go wrong.

Let me give you an example.

Say you start using OpenClaw and give it access to just one calendar to manage. Each day you believe more and more that nothing can go wrong, but you don't give it any more access. So when it deletes your calendar, your surprise is proportional to how far you had let your guard down, but the impact is contained, since it only had access to that one calendar.

On the other hand, let's say that as you got more comfortable with OpenClaw, you let it manage your personal email, then your drive, and then your production database. Now not only will you be really surprised when it drops a table or deletes the database, but the impact is also far larger than it would have been at the start. Both the surprise and the impact scale with how much you trusted it right before the catastrophe.

A really weird zoo

We've talked about lobsters, we've talked about turkeys, and now I want to add a brief note about swans.

If you've read Taleb's books, you'll be familiar with the concept of a black swan, which, in really simple terms, is an unpredictable event of extreme impact.

Taleb argues that we humans are terrible at estimating the likelihood of black swan events, and so they come as a big shock (in terms of both surprise and impact) when they do happen.

However, what I'm talking about here is not even a black swan event.

There are many areas in life where our discomfort comes from a lack of understanding, and where increasing your exposure as comfort increases is valid practical advice.

You climb more ambitious routes as you grow confident that your equipment will catch you, but you're still exposed to a black swan event (unpredictable, high impact) involving that equipment. You could, for example, break a leg because a carabiner failed due to an invisible manufacturing defect [2].

In this case though, the risks are not just unpredictable. A lot of them are very predictable. We know for a fact that hallucinations are a thing, and we know for a fact that prompt injection is a thing.

That means that giving more access to OpenClaw just because you're more comfortable is like continuing to drink expired milk just because you haven't gotten sick yet. The failure modes are known, so what we're really doing is gambling.

Why are you such a hater?

I think some people might read this post and assume I'm just dunking on Claire, or being anti-OpenClaw, but I'd like to make it clear that neither of these is true.

In the podcast, Claire was clear about the prompt injection risks, and she also offered a good mental model for dealing with "claws" that I agree with: treat them as you would a human assistant. That means giving each claw its own email and its own calendar, and only limited access to your own systems. Claire did not come across as oblivious to the risks.

As for OpenClaw, I'm far from a hater! I actually do think that it is a fascinating project that highlighted a lot of interesting use cases for LLMs. I like seeing people play around with it and build on top of it.

And as for me, I'm no AI skeptic. I use AI daily (never for writing, though) and have been playing around with claws too.

But I wrote this post because it became clear to me that the advice rests on a fallacy, and it got me thinking that I fall for the very same fallacy myself at times. I'm certainly getting more comfortable with AI and taking more risks as a result. So what I'm talking about here is not a Claire thing. It's a me thing. It's an industry thing.

We're still early and there's a lot to learn. We're all still figuring this out and I think it's important to have open discussions about the good, the bad, and the ugly parts of the technology, which is all I'm trying to do here.

I think claws and autonomous agents are really powerful and useful, but I think they get more powerful once we have solid security primitives in place. That's where trust should come from in my opinion.

There will always be things we can't predict. There's no big company out there that hasn't gone through outages and data breaches. But there are ways to reduce risk.

On my end, as I've mentioned, I'm subject to everything I wrote about here, but I'm trying more and more to internalize the principles I described and to build tooling with them in mind.

Conductor is all the rage these days and it's really cool software, but it runs on your machine with no sandboxing, so I built my own orchestrator designed to run on a VPS (I might open source it soon).

I've also been building AgentPort, a self-hostable gateway for connecting your agents to third-party services with granular permissions. It's a layer that sits between your agent and all your integrations and controls what the agent can do without approval, what it can never do, and what it can do only if you approve each request. For example, it can look up customers and bills on Stripe, but issuing a refund requires your approval.
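To make those three tiers concrete, here is a minimal Python sketch of that kind of policy check. It is not AgentPort's actual code or API: the `Decision` enum, the `POLICY` table, the `decide` and `run_action` functions, and the action names are all hypothetical, and the human approval step is stubbed out as a callback.

```python
from enum import Enum
from typing import Callable


class Decision(Enum):
    ALLOW = "allow"                # agent may act without asking
    DENY = "deny"                  # agent may never do this
    REQUIRE_APPROVAL = "approval"  # a human must approve each call


# Hypothetical policy table: action names are illustrative only,
# not real AgentPort or Stripe identifiers.
POLICY: dict[str, Decision] = {
    "stripe.customers.read": Decision.ALLOW,
    "stripe.invoices.read": Decision.ALLOW,
    "stripe.refunds.create": Decision.REQUIRE_APPROVAL,
    "stripe.customers.delete": Decision.DENY,
}


def decide(action: str) -> Decision:
    # Default-deny: anything not explicitly listed is refused.
    return POLICY.get(action, Decision.DENY)


def run_action(
    action: str,
    execute: Callable[[], str],
    approve: Callable[[str], bool],
) -> str:
    """Run `execute` only if the policy (and, when required, a human) allows it."""
    decision = decide(action)
    if decision is Decision.DENY:
        return f"denied: {action}"
    if decision is Decision.REQUIRE_APPROVAL and not approve(action):
        return f"not approved: {action}"
    return execute()


if __name__ == "__main__":
    # Stub approver that always says no, standing in for a real prompt to the user.
    def always_no(action: str) -> bool:
        return False

    print(run_action("stripe.customers.read", lambda: "listed customers", always_no))
    print(run_action("stripe.refunds.create", lambda: "refund issued", always_no))
    print(run_action("db.drop_table", lambda: "dropped", always_no))
```

Run as a script, this prints `listed customers`, `not approved: stripe.refunds.create`, and `denied: db.drop_table`. Unlisted actions fall back to deny, so the agent's reach only grows when you deliberately add an entry to the policy, rather than as a side effect of your comfort growing.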

If any of this is interesting to you, let me know and let's connect. I'm really keen to hear about people's perspectives and what you're building in this space.

[1] If you want to listen to this discussion yourself, it happens at around minute 22 of the Lenny's Podcast episode "From skeptic to true believer: how OpenClaw changed my life".

[2] There are still ways to prepare for the unpredictable, which is part of the point Taleb makes in his books. Note also that I built the analogy around climbing equipment specifically because most climbing accidents are caused by human error, which is predictable and addressable.